Freedom! We all love, cherish, and go to great lengths to protect it. When social media became popular, it gave people the freedom to show THEIR ground realities to the world. It also gave the general public some say over what content reaches the masses, a power that once belonged only to the mainstream media, governments, and corporations. And that’s great. But by giving everyone on the planet a stage, social media also unleashed the tsunami of toxic content we see on the internet today.

Toxic content is anything on the internet that involves aggressive, rude, or degrading behavior or attitudes meant to hurt others, along with material like graphic media, extremism, and dark humor that can seriously harm your mental health.

In this post, we’ll look at the toxic side of the internet and discuss what you can do to protect yourself and your loved ones from its effects. So, let’s begin.

What is UGC?

Before moving further and discussing toxic content on the internet in-depth, let’s take a moment to understand what user-generated content (UGC) is and how it impacts the world.

UGC, sometimes known as UCC (user-created content), is any content, such as text, videos, images, and reviews, created by the public rather than by corporate brands.

Social media platforms and internet forums are some of the biggest UGC promoters.

As you may already know, we’re shifting to Web 3.0, a complete change in how we use the internet. Read my post, “The Future with the Internet: What is Web 3.0 and How Does it Concern You?” for more information.

This is similar to what happened after 2004, when the world saw a new version of the internet: Web 2.0. It gave people an entirely new way to communicate, and that changed many things.

What Changed After UGC?

Although UGC advanced the way we communicate, its most critical change was taking the monopoly on media creation away from corporations, governments, and media houses and handing it to the people.

This gave the masses the freedom to share their thoughts, reach a large audience, and influence others. And that’s hugely important, because it lets people see what’s happening around the world without the biases of a few powerful gatekeepers.

But when you hand control of something this important to so many people, there are bound to be consequences. And that’s exactly what happened.

The Downside

Toxic content on the internet is one of the many downsides of modern technology. It affects your mental, psychological, and emotional health in ways that can be really hard to undo.

Here are some of the problems we see with UGC on social media.

NSFW Content

NSFW (“not safe for work”) refers to internet material that is best viewed in private, because it could be offensive or inappropriate in a workplace.

It can include nudity, pornography, political incorrectness, profanity, slurs, graphic violence, or other potentially disturbing subject matter.

Search engines like Google generally keep this kind of content out of results unless you search for it using specific terms. But some social media sites allow it to be uploaded and, depending on your settings, even push it onto the front page. These platforms include:

- 4chan

- Ello

- And more…

Although sites like Facebook, Instagram, Pinterest, and Tumblr claim to be NSFW-free, there are groups and pages operating in the grey area when it comes to NSFW.

Now, why is this a problem?

Of course, if you’re an adult searching for and viewing legally produced NSFW content, it may not be that big of a deal. But adults aren’t the only users on these sites. In fact, sites like Reddit, 4chan, and Instagram have plenty of minors among their users.

That’s why this kind of content can be a problem.

Extremism

Extremist groups have been trolling the internet for decades, and they’ve learned to temper their words and disguise their intentions to attract the masses, says Alexandra Evans, a policy researcher at RAND.

“There’s this idea that there’s a dark part of the internet, and if you just stay away from websites with a Nazi flag at the top, you can avoid this material,” she said. “What we found is that this dark internet, this racist internet, doesn’t exist. You can find this material on platforms that any average internet user might visit.”

Extremist content is available on all corners of the internet, and finding it doesn’t even require much effort.

Research suggests that some people are particularly vulnerable to radical and extremist content. And if their feed keeps showing them such content, their ideas and beliefs can gradually shift to match those of the people whose posts they’re seeing.

Unmoderated Live Streams

Although sites like Facebook and YouTube have robust policies for removing content uploaded to their sites, one thing they can’t fully control is what people do on their live-streaming features.

For years, we’ve seen people do devastating things live on the internet, leaving a lasting impact on viewers’ psyches. Yet to date, there’s been no real solution to this problem.

Here’s just one example. Not so long ago, two YouTube channels, “PewDiePie” and “T-Series,” were locked in a meme war over which would be the first to reach 100 million subscribers. The internet was divided, but in a healthy way. People were making memes, songs, and funny videos to support their favorite creator. But something happened in March 2019 that shook the whole internet.

In a live stream, a gunman who attacked two mosques in Christchurch, New Zealand, told viewers to “subscribe to PewDiePie” shortly before opening fire on worshippers. The attacks killed 51 people.

And that’s not an isolated case. There are many examples of people doing devastating things on live streams and drawing large audiences to watch their crimes.

Learn more about it in this NBC article entitled, “Who Is Responsible for Stopping Live-Streamed Crimes?”

Hate Speech

There is no shortage of hate speech on social media. In fact, experts say that violence attributed to online hate speech has increased worldwide. Societies confronting the trend must deal with questions of free speech and censorship on widely used tech platforms.

The same technology that connects the world and allows people in need to reach others who can help also acts as an open platform for hate groups seeking to recruit and organize.

It also allows conspiracy peddlers to reach audiences far broader than their core readership.

And this has serious consequences. Learn more about hate speech on social media and its consequences in this Council on Foreign Relations article.

Cyber Bullying

Ranging from hurtful jokes told with harmless intentions to full-blown attacks on a person’s mental health, cyberbullying covers a lot of ground.

It’s an ongoing problem on social media that has driven many victims to self-harm and even suicide.

Although most cyberbullying happens privately via messages, there have been instances where cyberbullies have humiliated their victims in public using posts, videos, and comments.

Learn more about cyberbullying and how to stop it in my post, “Cyberbullying: What It Is & How to Keep Your Kids Away from Cyberbullies.”

What Are the Social Media Giants Doing to Stop This?

There’s no denying that toxic content exists on the internet. But that doesn’t mean social media platforms aren’t taking action. They’re doing many things to “make their platforms a safe space for their users.”

Have a look.

Meta

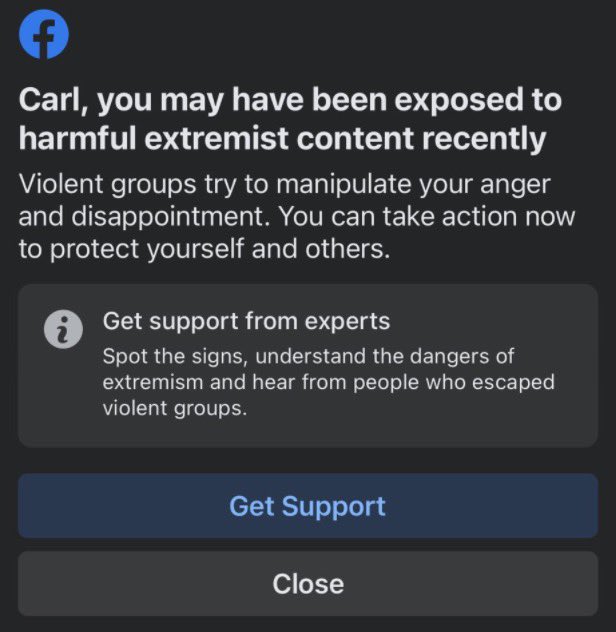

In July 2021, CNN reported that Facebook had been testing new prompts to reach users who may be vulnerable to “becoming an extremist.”

Per Facebook, these in-app messages are a test to direct such vulnerable users to resources to combat extremism.

Although Facebook didn’t announce this publicly, several Twitter users reported spotting these messages. One message read, “Are you concerned that someone you know is becoming an extremist?”

Another prompt warned users who may have been exposed to extremist content on the platform: “Violent groups try to manipulate your anger and disappointment,” it read. “You can take action now to protect yourself and others.”

Facebook spokesperson Andy Stone said the messages are “part of our ongoing Redirect Initiative work.”

The initiative is part of a broader effort to fight extremism on the platform, in collaboration with groups like Life After Hate, which helps people leave extremist groups.

Similarly, Meta announced in January 2022 that it would rank potentially harmful Instagram posts lower in the feed, so users are less likely to encounter them.

Meta also uses automated systems to identify and remove content that could harm its users. And, of course, it performs a detailed review of posts flagged by users.

TikTok

In July 2021, TikTok rolled out technology that automatically detects and removes content violating its policies on minor safety, violent and graphic content, illegal activities, adult nudity, and sexual activities.

TikTok applies automation to these categories because, per the company, “these are the areas where its technology has the highest degree of accuracy.”

The company also employs human content moderators on its safety team to review content that users report.

In October 2021, TikTok said it had removed 81,518,334 videos for violating its community guidelines or terms of service in a single quarter. That sounds like a lot, but it amounts to less than 1% of the more than 8.1 billion videos posted on the platform.

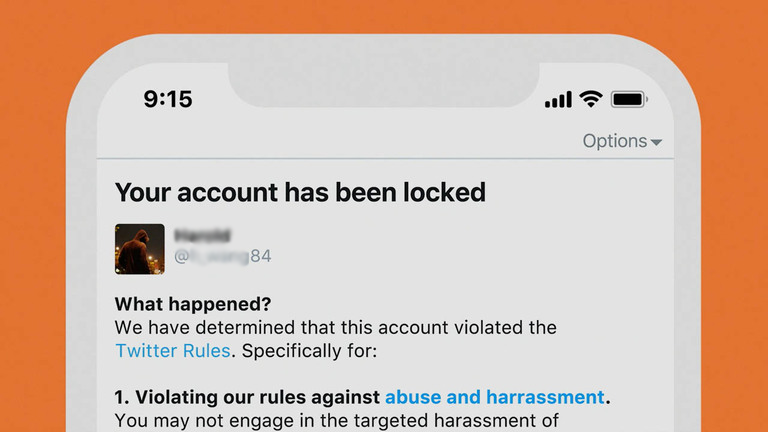

Content removal is a bit different with Twitter, as they’re one of the biggest supporters of free speech. Here’s what their policy says about offensive content.

“Twitter is a social broadcast network that enables people and organizations to publicly share brief messages instantly around the world. This brings a variety of people with different voices, ideas, and perspectives. People are allowed to post content, including potentially inflammatory content, as long as they’re not violating the Twitter Rules. It’s important to know that Twitter does not screen content or remove potentially offensive content.”

But this doesn’t mean there’s no moderation on the site. In their sensitive media policy, they say:

“You may not post media that is excessively gory or share violent or adult content within live video or in profile header, or List banner images. Media depicting sexual violence and/or assault is also not permitted.”

Twitter also allows sexual content on its platform, as long as it’s consensual and marked as sensitive when uploaded. Here’s what its non-consensual nudity policy says:

“You may not post or share intimate photos or videos of someone that were produced or distributed without their consent. Pornography and other forms of consensually produced adult content are allowed on Twitter, provided that this media is marked as sensitive.”

Moderation Alone Won’t Fix the Problem: MIT Technology Review

“The approach [current moderation strategies] clearly has problems: harassment, misinformation about topics like public health, and false descriptions of legitimate elections run rampant. But even if content moderation were implemented perfectly, it would still miss a whole host of issues that are often portrayed as moderation problems but really are not,” say Nathaniel Lubin and Thomas Gilbert in an article on the MIT Technology Review website.

The article emphasizes how social media algorithms prioritize engagement and watch time over user safety, which often amplifies extreme content and creates filter bubbles.

In turn, this has fueled divisive ideologies and hindered constructive dialogue online. So, as advanced as content moderation on social media has become, it hasn’t been enough to tackle the deeper problems rooted in how these platforms are designed and how they function.

Lubin and Gilbert propose several solutions in this article, like:

- Creating alternative platforms with different design principles

- Establishing clear regulations and guidelines for social media companies

- Fostering digital literacy and critical thinking skills among users

Do you agree?

Why is Gen Z Leaving Social Media?

The Pew Research Center’s December 2022 report said that since 2019, major social media platforms have seen a significant decrease in Gen Z users. Why?

“The social hierarchies created by decades of public ‘like’ counts, and the noise level generated by clickbait posts and engagement lures, have worn on Gen Z,” says Axios, an American news website. “And constant pivots by social media giants have eroded younger users’ trust.”

Besides that, several stories have come to light that have caused users to rethink their social media use.

As a result, a considerable portion of Gen Z has shifted to an array of smaller apps, each serving a distinct function. For example:

- Twitch for gaming and live-streaming

- Discord for private conversations

- BeReal for spontaneous photo updates

- Poparazzi for candid photos of friends

So, do you think this is the right move? Should you partake in this mass exodus from social media giants’ products?

Well, I won’t try to stop you if that’s what you want. But if you’d rather stay, you’ll need to make some serious changes to how you use social media, so you can enjoy its benefits without the risks that tag along. More on that below.

What Can You Do?

Even with strong policies and moderation, some toxic content on the internet is inevitable. It slips past even eagle-eyed moderators because the sheer volume of content published online is enormous.

And when you’re exposed to this kind of content, it affects your mental, psychological, and emotional health.

But this doesn’t mean there’s nothing that you can do. Here are some of the ways you can protect yourself from toxic content on the internet.

Report Content and Block the Account Responsible

If you encounter inappropriate content on social media, most platforms give you an option to report the content. And to ensure that you don’t see it again, you can block accounts that upload such content.

Simply search for “how to report content on [your specific social media platform]” or “how to block an account on [your specific social media platform]” and follow the steps given.

Also, make sure to unfollow accounts that post disturbing content or content that brings you down.

Don’t Engage with Online Extremism

Extremist content is everywhere on social media. And even if you disagree with it, any kind of engagement, whether viewing it or commenting on it, tells the algorithm that you want to see more of it.

Soon, your homepage will be filled with content showing extremism capable of changing your thoughts, ideas, and beliefs. So, don’t engage with extremist content, not even to “show them.”

Add NSFW Filters

Although sites like Reddit and Twitter have NSFW filters, most platforms don’t. So, to make sure that you or younger members of your household don’t encounter NSFW material, you can use content-filtering apps and browser extensions.

Simply search “Block Inappropriate Content on [your specific browser or device]” in Google and follow the instructions.

Moderate Your Child’s Tech Use

And finally, make sure you’re moderating your child’s tech use. You don’t have to “watch” their every move. Just check their devices once in a while for anything that shouldn’t be there.

Final Thoughts

Indeed, there’s no shortage of toxic content on social media. But this doesn’t mean that social media in itself is bad. The kind of effect it has on your physical, mental, psychological, and emotional being depends on how you use it.

And it’s not just social media. Technology in general can be harmful if you misuse it.

But there’s a way you can continue enjoying the benefits that technology brings without being subjected to the health effects that tag along. And that’s by building a healthier relationship with it.

Check out “The Healthier Tech Podcast,” where experts from different industries discuss how to make technology safer and much healthier for you and your loved ones.